Azure Onboarding Guide for IT Organizations

Introduction

There are many good reasons for enterprises to move to the cloud, such as greater business agility, keeping pace with the speed of innovation, and cost savings. Recent cloud surveys show that cloud adoption is growing and has now hit its stride. The strong growth in the use of cloud means that the majority of organizations are now operating in a hybrid environment that consists of on-premises and cloud-based services.

The cloud is also changing how companies consume technology. Employees and business departments are more empowered than ever before to find and use cloud applications, often with limited or no involvement from the IT department, creating what's called "shadow IT." Despite the benefits of cloud computing, companies face numerous challenges, including the integration of cloud services into the enterprise architecture, security and compliance of corporate data, managing employee-led cloud usage, establishing operational processes for cloud services, and developing the skills needed in the cloud era.

As companies move data to the cloud, IT departments are looking to put in place policies and processes so that employees and business departments can take advantage of cloud services that drive business growth without compromising the security, compliance, and governance of corporate data.

The purpose of this document is to provide an overview, guidance, and best practices for enterprise IT departments to introduce, consume, and manage Microsoft Azure-based services within their organization. The target audience is enterprise architects, cloud architects, system architects, and IT managers.

This document is not intended to replace existing documentation about Microsoft Azure services and features.

Moving to the cloud

The evolution and maturation of cloud technologies have brought enterprise IT into a transitional stage. A 2015 EDUCAUSE study found that CIOs expect a significant shift in focus in the next five years, away from managing primarily infrastructure and toward the cloud.

But why is cloud technology so irresistible? Why is making the migration such a good idea for businesses? Answering these questions for your business before you make the move is essential. There are no two ways about it: Your business will move to the cloud, and making that move is a good idea. But your success hinges on your reasoning for making the move. There are many different opportunities to migrate to the cloud, each of which may have a different reason behind it, and it's critical that you identify each of these reasons.

A study from Accenture (Behind Every Cloud, There's a Reason) describes the six most common business and technology drivers for making the move to the cloud, including how to identify these drivers, how to choose the right drivers for your program, and how to define them. Properly identifying, defining, and balancing these drivers can help your business successfully execute its cloud strategy and move toward a true transformation.

The six drivers for making the move to the cloud fall under two main categories: business drivers, including business growth, efficiency, and experience, and technology drivers, including agility, cost, and assurance.

- Business growth

What are you doing to make your company more successful from the perspective of expansion? This can take a number of different forms (for example, driving sales, enlarging wallet share, or increasing productivity), so it's important to clearly define how you intend to achieve this growth.

- Efficiency

Efficiency includes streamlining processes to get work done faster or with fewer resources. This can in turn fuel growth (for example, by allowing you to take on more work) or reduce costs (for example, by reducing the amount of resources required).

- Experience

Whether it's external or internal, the customer experience is of utmost importance in today's world. A good experience can increase brand loyalty among customers and retention among employees. In general, a positive customer experience is strongly tied to brand value.

- Agility

Agility is the most common cloud driver today, especially when IT is leading the charge. Being agile helps IT change and scale fast enough to keep up with business needs.

- Cost

The difference between the technology driver cost and the business driver efficiency is often misunderstood. Although efficiency can lead to cost savings, the cost driver focuses on reducing the cost of IT licenses or operations and/or redefining the cost model for technology solutions.

- Assurance

Finally, assurance encompasses the achievement of mission-critical technology outcomes, such as protecting against datacenter crashes or security breaches and maximizing disaster recovery effectiveness. Going to the cloud for assurance frees IT to be more strategic and passes these responsibilities to a provider that is typically better at handling them than your business is.

As you begin your move to the cloud, it's important to identify which of these factors is driving your journey. Doing so should help inform your next steps and justify making the move.

Adaptation of the IT organization

The effective adoption of cloud services requires changes to an organization's existing operational practices and procedures (see EDUCAUSE Preparing the IT Organization for the Cloud). The external nature of cloud services may require an organization to rethink its IT service management and disaster recovery practices, as well as how given cloud services integrate with its existing in-house technology infrastructure. The pay-as-you-go cost model common with cloud services may entail changes to financial management practices and total cost of ownership calculations. Procurement processes may need to be adjusted to increase agility and effectively address the unique risks associated with cloud services, and new vendor management roles may need to be established and resourced to ensure ongoing compliance with contract terms.

Transforming the IT organization

Cloud strategy development is an evolutionary process in most enterprises. Adopting a cloud strategy requires careful coordination among a variety of stakeholders, including IT and information security staff, legal teams, compliance experts, procurement specialists, and institutional leadership. Once an enterprise cloud strategy is adopted, the implementation of those strategies requires transformation in the IT organization. Some common approaches and stages to developing an enterprise-wide cloud strategy include:

- Cloud aware

Enterprise users and IT staff are aware of broad cloud trends but are not yet prepared to adopt public-cloud solutions. These institutions may choose to build on-premises solutions in a way that prepares them for an eventual move to the cloud.

- Cloud experimentation

The IT organization begins to learn about the various cloud services available to them in the forms of SaaS, PaaS, and IaaS. The organization may begin deploying some common SaaS solutions (such as Office 365), which sometimes grows into testing IaaS deployments.

- Opportunistic cloud

The IT organization begins to actively seek out cloud solutions that meet new business requirements. Services may remain as traditional on-premises deployments, but cloud solutions are considered and deployed when reliability, scalability, or other benefits are perceived.

- Cloud first

This strategy places cloud at the top of the decision-making chain. The default assumption within the enterprise is that cloud services will fulfil the majority of the enterprise computing needs.

The adoption of the various cloud strategies causes a paradigm shift that impacts both the IT organization and IT staff members. Business units are increasingly driving the selection and adoption of cloud IT solutions, and in doing so they may bypass the IT units. Enterprises will achieve the best outcomes when their IT organizations serve as enablers, simplifying and accelerating business units' adoption of cloud services. In order to evolve into the role of cloud enabler, IT units should carefully consider the value they bring with regard to cloud service adoption, such as:

- Establish strategy and goals

- Define criteria for moving to or starting applications in the cloud

- Architect core infrastructure components for cloud integration: Identity, Networking, Security

- Acquire cloud development skills

- Retool for adoption and change management

- Take a systematic and disciplined approach to security and compliance

Now more than ever, IT units must clearly understand the evolving needs of business units and be prepared to help them assess the full range of possible solutions to their technology needs. Successful IT organizations will find ways to simplify and accelerate cloud adoption by reducing the barriers their partners face and by helping their company avoid potential pitfalls.

IT organizations must develop competencies with cloud technologies and services even as those services evolve and change. Practically, this means that staff must have time to explore new technologies and that organizations may need to increase their investment in IT staff training. IT organizations that fail to provide sufficient time for training and exploration will likely find themselves unable to contribute meaningfully to the enterprise technology conversation. Business units will not wait. They will simply bypass IT organizations unable to meet their needs. Change management practices and IT governance processes need to become agile and rapidly responsive to the needs of users, while still assessing risks to the organization.

Adopting the cloud

In any transformative change, it is important to understand what the destination is and what the waypoints along the journey will be. There are multiple potential destinations for any application, and IT cloud deployments will be a mixture of them (hybrid cloud). Hybrid cloud uses compute or storage resources on your on-premises network and in the cloud. You can use hybrid cloud as a path to migrate your business and its IT needs to the cloud or integrate cloud platforms and services with your existing on-premises infrastructure as part of your overall IT strategy.

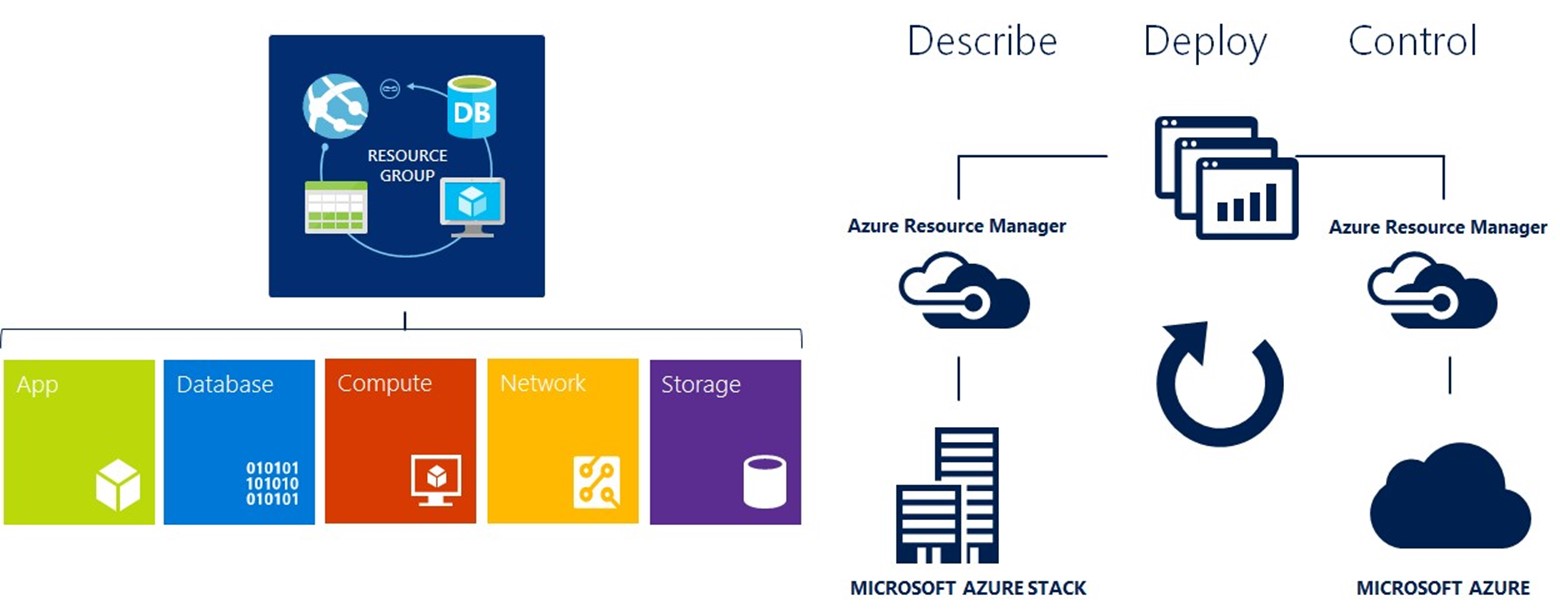

- Private Cloud

Private Cloud technologies are hosted in an on-premises datacenter or in a datacenter of a managed service provider. This might be necessary for certain applications or data that can't be moved to the cloud. Private Clouds are especially useful if they implement a technology stack that is consistent with the Public Cloud. Microsoft Azure Stack is a product that enables organizations to deliver Azure services from their own datacenter. It helps you build and deploy your applications the same way regardless of whether they run on Azure or Azure Stack.

- Infrastructure as a Service (IaaS)

Infrastructure as a Service abstracts hardware (server, storage, and network infrastructure) into a pool of computing, storage, and connectivity capabilities that are delivered as services for a usage-based (metered) cost. IaaS services allow you to build and run server-based IT workloads in the cloud, rather than in your on-premises datacenter. IaaS services typically consist of an IT workload that runs on virtual machines and is transparently connected to your on-premises network. Your on-premises users will not notice the difference. IaaS is one of the most common cloud deployment patterns to date. It eliminates the need for capital expense budgets and reduces the time between purchasing and deployment to almost nothing. In addition, it is most similar to how IT operates today.

- Platform as a Service (PaaS)

Platform as a Service delivers application execution services, such as application runtime, storage, and integration, for applications written for a prespecified development framework. In a PaaS deployment model, enterprises focus on deploying their application code into PaaS services. PaaS provides an efficient and agile approach to operating scale-out applications in a predictable and cost-effective manner. Service levels and operational risks are shared because the consumer must take responsibility for the stability, architectural compliance, and overall operations of the application while the provider delivers the platform capability (including the infrastructure and operational functions) at a predictable service level and cost.

- Software as a Service (SaaS)

Software as a Service delivers business processes and applications, such as CRM, collaboration, and email, as standardized capabilities for a usage-based cost at an agreed, business-relevant service level. SaaS provides significant efficiencies in cost and delivery in exchange for minimal customization and represents a shift of operational risks from the consumer to the provider. All infrastructure and IT operational functions are abstracted away from the consumer. IT departments need only take care of provisioning users and data and perhaps integrating the application with single sign-on.

The chart below shows the different responsibilities for IaaS, PaaS, and SaaS.

Most enterprises have already developed, or have started developing, modern cloud applications. Those applications have been designed for the cloud right from the beginning, and they make use of PaaS offerings such as Azure Web Apps, Mobile Apps, Logic Apps, SQL Databases, Stream Analytics, and HDInsight, among others. But a large amount of traditional enterprise IT can also benefit from the cloud without re-architecting existing applications.

Large enterprises run hundreds or thousands of applications, perhaps on tens of thousands of virtual machines. Key questions that need to be answered are: Which applications could be moved? How could they be moved? How should they be prioritized? How does the move affect the business?

Therefore, it is important to create a well-attributed catalog of applications managed by IT. The relative importance of attributes such as business criticality, number of integration points, and performance requirements can be weighted, and a prioritized list can be built. You can think about those characteristics top-down or bottom-up. Top-down describes where each application should go; bottom-up describes where it can go.

The top-down assessment first evaluates the security and compliance aspects. Then it assesses the complexity, interfaces, authentication, data structure, latency requirements, and other aspects of the application architecture. Next are operational requirements such as service levels, maintenance windows, and monitoring. The result is a score that reflects the relative difficulty of migrating each application. Furthermore, the financial benefits of migrating the application, such as operational efficiency, total cost of ownership, and return on investment, have to be taken into account. The seasonality, required scalability, and elasticity of the application also need to be considered, and finally any business continuity and resilience requirements that the application might have. With this analysis you can identify the applications that have the highest potential value and are best suited for migration.
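The weighting and ranking described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the attribute names, the weights, the 1-to-5 rating scale, and the sample applications are all hypothetical and are not taken from this guide or from any Microsoft tool.

```python
from dataclasses import dataclass

# Hypothetical weights (assumption): a higher weight gives the attribute
# more influence on the final priority. The weights sum to 1.0.
WEIGHTS = {
    "business_value": 0.40,   # financial benefit of moving the application
    "migration_ease": 0.35,   # inverse of the assessed migration difficulty
    "elasticity_need": 0.25,  # seasonality and scalability requirements
}


@dataclass
class Application:
    name: str
    ratings: dict  # attribute name -> rating from 1 (low) to 5 (high)


def priority(app, weights=WEIGHTS):
    """Return the weighted sum of the application's attribute ratings."""
    return sum(weight * app.ratings[attr] for attr, weight in weights.items())


def prioritize(apps, weights=WEIGHTS):
    """Return the applications sorted from highest to lowest priority."""
    return sorted(apps, key=lambda app: priority(app, weights), reverse=True)


# Illustrative catalog entries (hypothetical applications and ratings).
catalog = [
    Application("intranet-wiki", {"business_value": 2, "migration_ease": 5, "elasticity_need": 1}),
    Application("web-shop", {"business_value": 5, "migration_ease": 3, "elasticity_need": 5}),
    Application("legacy-erp", {"business_value": 4, "migration_ease": 1, "elasticity_need": 2}),
]

if __name__ == "__main__":
    for app in prioritize(catalog):
        print(f"{app.name}: {priority(app):.2f}")
```

In practice the attribute list would come from your own application catalog, and the weights would be agreed upon with the business units involved.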

Analyzing the applications from a bottom-up perspective provides a view into whether an application is eligible, at a technical level, to migrate. Evaluated requirements are typically maximum memory, number of cores, operating system and data disk space, network interface cards, network and IP settings, load balancing, clustering, versions of operating systems, databases, middleware products, and web servers, among others.
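A bottom-up check of this kind could look like the following Python sketch. The limits and the supported operating system list are placeholder values chosen for illustration (they are assumptions, not actual Azure limits), and `ServerProfile` and `blockers` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class ServerProfile:
    name: str
    cores: int
    memory_gb: int
    data_disk_gb: int
    nics: int
    os: str


# Placeholder limits of the target platform (assumption, NOT real Azure limits).
TARGET_LIMITS = {"cores": 32, "memory_gb": 256, "data_disk_gb": 4096, "nics": 4}

# Placeholder list of operating systems accepted by the target (assumption).
SUPPORTED_OS = {"Windows Server 2012 R2", "Ubuntu 16.04"}


def blockers(server):
    """Return a list of technical reasons that prevent a direct migration."""
    issues = []
    for attr, limit in TARGET_LIMITS.items():
        value = getattr(server, attr)
        if value > limit:
            issues.append(f"{attr}={value} exceeds limit {limit}")
    if server.os not in SUPPORTED_OS:
        issues.append(f"unsupported OS: {server.os}")
    return issues


# Illustrative inventory entries (hypothetical servers).
app_server = ServerProfile("app-01", cores=8, memory_gb=64, data_disk_gb=512,
                           nics=1, os="Windows Server 2012 R2")
db_server = ServerProfile("db-07", cores=64, memory_gb=1024, data_disk_gb=512,
                          nics=1, os="Windows Server 2003")

if __name__ == "__main__":
    for server in (app_server, db_server):
        found = blockers(server)
        print(server.name, "eligible" if not found else found)
```

An empty result means no technical blocker was found for that server; it does not say whether the application should move, which is the question the top-down assessment answers.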

Another aspect is the cloud platform (IaaS, PaaS, or SaaS) that the application should be migrated to. Many enterprise organizations take a three-step approach to cloud adoption. The first priority is to take advantage of SaaS productivity workloads such as Office 365. The second priority is to base new modern cloud applications on PaaS (Azure SQL databases, Azure Web Apps, Logic Apps, Mobile Apps, etc.). The focus is on functionality rather than infrastructure. The third priority is moving existing applications to IaaS virtual machines by using one of two approaches:

- Lift and Shift: Existing virtual machines are shifted to the cloud.

- Build in the cloud: applications are prebuilt in Azure, and traditional methods are used to back up and restore data.

To maximize efficiency, organizations intend to use the higher-level services of Azure wherever possible. Even though migration to Azure is the goal, retaining core network services in traditional on-premises datacenters will be necessary for the near future, which results in a hybrid cloud.

This guide focuses on getting Azure ready to use for PaaS and IaaS and provides best practices for migrating, managing, and operating IaaS-based workloads in Azure. For further details about migration planning, please refer to this free ebook: https://info.microsoft.com/enterprise-cloud-strategy-ebook.html.

Preparing and training IT staff for the cloud

In order for IT staff to function as change agents supporting current and emerging cloud technologies, their buy-in for the use and integration of these technologies is needed. For this, staff need three things:

- An understanding of their roles and of any changes to their current position

- Time and resources to explore the technologies

- An understanding of the business case for the technologies

At each evolutionary phase during the history of the IT industry, the most notable industry changes are often marked by the changes to staff roles. During the transition from mainframes to the client/server model, the role of the computer operator largely disappeared, replaced by the system administrator. When the age of virtualization arrived, the requirement for individuals working with physical servers diminished, replaced with a need for virtualization specialists. Similarly, as institutions shift to cloud computing, roles will likely change again. For example, datacenter specialists might be replaced with cloud financial analysts. Even in cases where IT job titles have not changed, the daily work roles have evolved significantly.

IT staff members may feel anxious about their roles and positions as they realize that a different set of skills is needed for the support of cloud solutions. But agile employees who explore and learn new cloud technologies don't need to have that fear. They can lead the adoption of cloud services and help the organization understand and embrace the associated changes. Typical mappings of roles are:

Microsoft and partners offer a variety of options for all audiences to develop their skills with Microsoft Azure services.

- Microsoft Virtual Academy (https://mva.microsoft.com/product-training/microsoft-azure) offers training from the people who helped to build Microsoft Azure. From the basic overview to deep technical training, IT staff will learn how to leverage Microsoft Azure for their business.

- Microsoft IT Pro Cloud Essentials (https://www.itprocloudessentials.com) is a free annual subscription that includes cloud services, education, and support benefits. IT Pro Cloud Essentials provides IT implementers with hands-on experience, targeted educational opportunities, and access to experts in areas that matter most to increase knowledge and create a path to career advancement.

- The Microsoft IT Pro Career Center (https://www.itprocareercenter.com) is a free online resource to help map your cloud career path. Learn what industry experts suggest for your cloud role and the skills to get you there. Follow a learning curriculum at your own pace to build the skills you need most to stay relevant.

We recommend turning knowledge of Microsoft Azure into official recognition with Microsoft Azure certification training and exams (https://www.microsoft.com/en-us/learning/mcsd-azure-architect-certification.aspx).

Recommendations for moving to the cloud

Identify your drivers to move to the cloud and take a systematic approach.

| Recommendation | Description |
| --- | --- |
| Catalog existing applications | To understand what applications should be moved, when and how, it's important to create a well-attributed catalog of applications managed by IT. Then, the relative importance of each attribute (say, business criticality or amount of system integration) can be weighted and the prioritized list can be built. |
| Define criteria for moving to or starting applications in the cloud | You should set priorities within your migration plan based on a combination of business factors, hardware/software factors, and other technical factors. Your business liaison team should work with the operations team and the business units involved to help establish a priority listing that is widely agreed upon. For sequencing the migration of your workloads, you should begin with less-complex projects and gradually increase the complexity after the less-complex projects have been migrated. |
| Architect core infrastructure components for cloud integration | You must account for the following elements when planning and implementing hybrid cloud scenarios. |
| Acquire cloud development skills | You must develop competencies with cloud technologies and services even as those services evolve and change. Practically, this means that staff must have time to explore new technologies and that you may need to increase your investment in IT staff training. |
| Retool for adoption and change management | Rethink your IT service management and disaster recovery practices, as well as how a given cloud service integrates with your existing in-house technology infrastructure. Consider the usage of cloud-based IT service management solutions. |
| Take a systematic and disciplined approach to Security, Governance, Compliance | Invest in core capabilities within your organization that lead to secure environments: |

Managing security, compliance and data privacy

Every business has different needs and every business will reap distinct benefits from cloud solutions. Still, customers of all kinds have the same basic concerns about moving to the cloud. They want to retain control of their data, and they want that data to be kept secure and private, all while maintaining transparency and compliance. What customers want from cloud providers is:

- Secure our data

While acknowledging that the cloud can provide increased data security and administrative control, IT leaders are still concerned that migrating to the cloud will leave them more vulnerable to hackers than their current in-house solutions.

- Keep our data private

Cloud services raise unique privacy challenges for businesses. As companies look to the cloud to save on infrastructure costs and improve their flexibility, they also worry about losing control of where their data is stored, who is accessing it, and how it gets used.

- Give us control

Even as they take advantage of the cloud to deploy more innovative solutions, companies are very concerned about losing control of their data. The recent disclosures of government agencies accessing customer data, through both legal and extra-legal means, make some CIOs wary of storing their data in the cloud.

- Promote transparency

While security, privacy, and control are important to business decision-makers, they also want the ability to independently verify how their data is being stored, accessed, and secured.

- Maintain compliance

As companies expand their use of cloud technologies, the complexity and scope of standards and regulations continue to evolve. Companies need to know that their compliance standards will be met, and that compliance will evolve as regulations change over time.

Working to keep customer data safe

Security design and operations

Secure cloud solutions are the result of comprehensive planning, innovative design, and efficient operations. Microsoft makes security a priority at every step, from code development to incident response.

- Design for security from the ground up

Azure code development adheres to Microsoft's Security Development Lifecycle (SDL). The SDL is a software development process that helps developers build more secure software and addresses security compliance requirements while reducing development cost. The SDL became central to Microsoft's development practices a decade ago and is shared freely with the industry and customers. It embeds security requirements into systems and software through the planning, design, development, and deployment phases.

- Enhancing operational security

Azure adheres to a rigorous set of security controls that governs operations and support. Microsoft deploys combinations of preventive, defensive, and reactive controls including the following mechanisms to help protect against unauthorized developer and/or administrative activity:

- Tight access controls on sensitive data, including a requirement for two-factor smartcard-based authentication to perform sensitive operations.

- Combinations of controls that enhance independent detection of malicious activity.

- Multiple levels of monitoring, logging, and reporting.

In addition, Microsoft conducts background verification checks of certain operations personnel and limits access to applications, systems, and network infrastructure in proportion to the level of background verification.

- Assume breach

One key operational best practice that Microsoft uses to harden its cloud services is known as the "assume breach" strategy. A dedicated "red team" of software security experts simulates real-world attacks at the network, platform, and application layers, testing Azure's ability to detect, protect against, and recover from breaches. By constantly challenging the security capabilities of the service, Microsoft can stay ahead of emerging threats.

- Incident management and response

Microsoft follows a five-stage incident response process when managing both security and availability incidents for the Azure services. The goal for both types is to restore normal service security and operations as quickly as possible after an issue is detected and an investigation is started. The process, illustrated in the figure below, consists of the following activities: Detect, Assess, Diagnose, Stabilize, and Close. The Security Incident Response Team may move back and forth between Diagnose and Stabilize as the investigation progresses.

- Detect

The first indication of an event that warrants investigation.

- Assess

An on-call incident response team member assesses the impact and severity of the event. Based on evidence, the assessment may or may not result in further escalation to the security response team.

- Diagnose

Security response experts conduct the technical or forensic investigation, and identify containment, mitigation, and workaround strategies. If the security team believes that customer data may have become exposed to an unlawful or unauthorized individual, the Customer Incident Notification process begins in parallel.

- Stabilize, recover

The incident response team creates a recovery plan to mitigate the issue. Crisis containment steps such as quarantining impacted systems may occur immediately and in parallel with diagnosis. Longer term mitigations may be planned, which occur after the immediate risk has passed.

- Close/post-mortem

The incident response team creates a post-mortem that outlines the details of the incident, with the intention to revise policies, procedures, and processes to prevent a reoccurrence of the event.
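The five stages above, including the back-and-forth between Diagnose and Stabilize, can be modeled as a small state machine. The Python sketch below is an illustration only and does not represent Microsoft's tooling; the `Incident` class and the transition table are hypothetical, and the case where an assessment does not lead to escalation is deliberately not modeled.

```python
from enum import Enum


class Stage(Enum):
    DETECT = "Detect"
    ASSESS = "Assess"
    DIAGNOSE = "Diagnose"
    STABILIZE = "Stabilize"
    CLOSE = "Close"


# Allowed transitions (assumption derived from the description above).
# Diagnose and Stabilize may alternate; Close is terminal.
ALLOWED = {
    Stage.DETECT: {Stage.ASSESS},
    Stage.ASSESS: {Stage.DIAGNOSE},
    Stage.DIAGNOSE: {Stage.STABILIZE},
    Stage.STABILIZE: {Stage.DIAGNOSE, Stage.CLOSE},
    Stage.CLOSE: set(),
}


class Incident:
    """Tracks the current stage of an incident and the path taken so far."""

    def __init__(self):
        self.stage = Stage.DETECT
        self.history = [Stage.DETECT]

    def advance(self, next_stage):
        """Move to next_stage, or raise ValueError if the step is not allowed."""
        if next_stage not in ALLOWED[self.stage]:
            raise ValueError(
                f"cannot move from {self.stage.value} to {next_stage.value}"
            )
        self.stage = next_stage
        self.history.append(next_stage)


if __name__ == "__main__":
    incident = Incident()
    for step in (Stage.ASSESS, Stage.DIAGNOSE, Stage.STABILIZE,
                 Stage.DIAGNOSE, Stage.STABILIZE, Stage.CLOSE):
        incident.advance(step)
    print(" -> ".join(stage.value for stage in incident.history))
```

Encoding the allowed transitions in one table makes it easy to see that an incident cannot be closed before it has been stabilized.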

Infrastructure protection

Azure infrastructure includes hardware, software, networks, administrative and operations staff, and the physical datacenters that house it all. Azure addresses security risks across its infrastructure.

- Physical security

Azure runs in geographically distributed Microsoft facilities, sharing space and utilities with other Microsoft Online Services. Each facility is designed to run 24x7x365 and employs various measures to help protect operations from power failure, physical intrusion, and network outages. These datacenters comply with industry standards (such as ISO 27001) for physical security and availability. They are managed, monitored, and administered by Microsoft operations personnel.

- Monitoring and logging

Centralized monitoring, correlation, and analysis systems manage the large amount of information generated by devices within the Azure environment, providing continuous visibility and timely alerts to the teams that manage the service. Additional monitoring, logging, and reporting capabilities provide visibility to customers.

- Update management

Security update management helps protect systems from known vulnerabilities. Azure uses integrated deployment systems to manage the distribution and installation of security updates for Microsoft software. Azure uses a combination of Microsoft and third-party scanning tools to run OS, web application, and database scans of the Azure environment.

- Antivirus and antimalware

Azure software components must go through a virus scan prior to deployment. Code is not moved to production without a clean and successful virus scan. In addition, Microsoft provides native antimalware on all Azure VMs. Microsoft recommends that customers run some form of antimalware or antivirus on all virtual machines (VMs). Customers can install Microsoft Antimalware for Cloud Services and Virtual Machines or another antivirus solution on VMs, and VMs can be routinely reimaged to clean out intrusions that may have gone undetected.

- Penetration testing

Microsoft conducts regular penetration testing to improve Azure security controls and processes. Microsoft understands that security assessment is also an important part of our customers' application development and deployment. Therefore, Microsoft has established a policy for customers to carry out authorized penetration testing on their own (and only their own) applications hosted in Azure.

- DDoS protection

Azure has a defense system against Distributed Denial-of-Service (DDoS) attacks on Azure platform services. It uses standard detection and mitigation techniques. Azure's DDoS defense system is designed to withstand attacks generated from outside and inside the platform.

Network protection

Azure networking provides the infrastructure necessary to securely connect VMs to one another and to connect on-premises datacenters with Azure VMs. Because Azure's shared infrastructure hosts hundreds of millions of active VMs, protecting the security and confidentiality of network traffic is critical. In the traditional datacenter model, a company's IT organization controls networked systems, including physical access to networking equipment. In the cloud service model, the responsibilities for network protection and management are shared between the cloud provider and the customer. Customers do not have physical access, but they implement the logical equivalent within their cloud environment through tools such as Guest operating system (OS) firewalls, Virtual Network Gateway configuration, and Virtual Private Networks.

- Network isolation

Azure is a multitenant service, meaning that multiple customers' deployments and VMs are stored on the same physical hardware. Azure uses logical isolation to segregate each customer's data from that of others. This provides the scale and economic benefits of multitenant services while rigorously preventing customers from accessing one another's data. - Virtual networks

A customer can assign multiple deployments within a subscription to a virtual network and allow those deployments to communicate with each other through private IP addresses. Each virtual network is isolated from other virtual networks. - VPN and ExpressRoute

Microsoft enables connections from customer sites and remote workers to Azure Virtual Networks using Site-to-Site and Point-to-Site VPNs. For even better performance, customers can optionally use ExpressRoute, a private fiber link into Azure datacenters that keeps their traffic off the Internet.

- Encrypting communications

Built-in cryptographic technology enables customers to encrypt communications within and between deployments, between Azure regions, and from Azure to on-premises datacenters.

Data protection

Azure allows customers to encrypt data and manage keys, and safeguards customer data for applications, platform, system, and storage using three specific methods: encryption, segregation, and destruction.

- Data isolation

Azure is a multitenant service, meaning that multiple customers' deployments and virtual machines are stored on the same physical hardware.

- Protecting data at rest

Azure offers a wide range of encryption capabilities, giving customers the flexibility to choose the solution that best meets their needs. Azure Disk Encryption is a capability that lets you encrypt your Windows and Linux IaaS virtual machine disks. Azure Disk Encryption leverages the industry-standard BitLocker feature of Windows and the DM-Crypt feature of Linux to provide volume encryption for the OS and the data disks. The solution is integrated with Azure Key Vault to help you control and manage the disk encryption keys and secrets in your key vault subscription, while ensuring that all data in the virtual machine disks is encrypted at rest in your Azure storage.

SQL Database Transparent Data Encryption (TDE) is based on SQL Server's TDE technology, which encrypts the storage of an entire database by using an industry-standard AES-256 symmetric key called the database encryption key. SQL Database protects this database encryption key with a service-managed certificate. All key management for database copying, Geo-Replication, and database restores anywhere in SQL Database is handled by the service.

- Protecting data in transit

For data in transit, customers can enable encryption for traffic between their own VMs and end users. Azure protects data in transit, such as between two virtual networks. Azure uses industry-standard transport protocols such as TLS between devices and Microsoft datacenters, and within datacenters themselves.

- Encryption

Customers can encrypt data in storage and in transit to align with best practices for protecting confidentiality and data integrity. For data in transit, Azure uses industry-standard transport protocols between devices and Microsoft datacenters and within datacenters themselves. You can enable encryption for traffic between your own virtual machines and end users.

- Data redundancy

Customers may opt for in-country storage for compliance or latency considerations or out-of-country storage for security or disaster recovery purposes. Data may be replicated within a selected geographic area for redundancy.

- Data destruction

When customers delete data or leave Azure, Microsoft follows strict standards for overwriting storage resources before reuse. As part of our agreements for cloud services such as Azure Storage, Azure VMs, and Azure Active Directory, we contractually commit to specific processes for the deletion of data.

Identity and access

Microsoft has strict controls that restrict access to Azure by Microsoft employees. Azure also enables customers to control access to their environments, data, and applications.

- Enterprise cloud directory

Azure Active Directory is a comprehensive identity and access management solution in the cloud. It combines core directory services, advanced identity governance, security, and application access management. Azure Active Directory makes it easy for developers to build policy-based identity management into their applications. Azure Active Directory Premium includes additional features to meet the advanced identity and access needs of enterprise organizations. Azure Active Directory enables a single identity management capability across on-premises, cloud, and mobile solutions.

- Multi-Factor Authentication

Microsoft Azure provides Multi-Factor Authentication (MFA). This helps safeguard access to data and applications and enables regulatory compliance while meeting user demand for a simple sign-in process for both on-premises and cloud applications. It delivers strong authentication via a range of easy verification options (phone call, text message, or mobile app notification), allowing users to choose the method they prefer.

- Access monitoring and logging

Security reports are used to monitor access patterns and to proactively identify and mitigate potential threats. Microsoft administrative operations, including system access, are logged to provide an audit trail if unauthorized or accidental changes are made. Customers can turn on additional access monitoring functionality in Azure and use third-party monitoring tools to detect additional threats. Customers can request reports from Microsoft that provide information about user access to their environments.

Owning and controlling data

Customers will only use cloud providers in which they have great trust. They must trust that the privacy of their information will be protected, and that their data will be used in a way that is consistent with their expectations. Standards and processes that support Privacy by Design principles include the Microsoft Online Services Privacy Statement and the Microsoft Security Development Lifecycle. We then back those protections with strong contractual commitments to safeguard customer data, including offering EU Model Clauses (which provide terms covering the processing of personal information) and complying with international standards. Microsoft uses customer data stored in Azure only to provide the service, including purposes compatible with providing the service. Azure does not use customer data for advertising or similar commercial purposes.

Microsoft was the first major cloud service provider to make contractual privacy commitments that help ensure the privacy protections built into in-scope Azure services are strong. Among the many commitments that Microsoft supports are:

- EU Model Clauses

- US-EU Safe Harbor Framework and the US-Swiss Safe Harbor Program

- ISO/IEC 27018

Access to customer data by Microsoft personnel is restricted. Customer data is only accessed when necessary to support the customer's use of Azure. This may include troubleshooting aimed at preventing, detecting, or repairing problems affecting the operation of Azure and the improvement of features that involve the detection of, and protection against, emerging and evolving threats to the user (such as malware or spam). When granted, access is controlled and logged. Strong authentication, including the use of multifactor authentication, helps limit access to authorized personnel only. Access is revoked as soon as it is no longer needed.

Microsoft believes that customers should control their data whether stored on their premises or in a cloud service. We will not disclose Azure customer data to law enforcement except as a customer directs or where required by law. When governments make a lawful demand for Azure customer data from Microsoft, we strive to be principled, limited in what we disclose, and committed to transparency. In its commitment to transparency, Microsoft regularly publishes a Law Enforcement Requests Report that discloses the scope and number of requests we receive. Microsoft keeps customers informed about the processes to protect data privacy and security, including practices and policies. Microsoft also provides the summaries of independent audits of services, which helps customers pursue their own compliance.

Managing compliance and data privacy regulations

Microsoft invests heavily in the development of robust and innovative compliance processes. The Microsoft compliance framework for online services maps controls to multiple regulatory standards. This enables Microsoft to design and build services using a common set of controls, streamlining compliance across a range of regulations today and as they evolve in the future. Microsoft compliance processes also make it easier for customers to achieve compliance across multiple services and meet their changing needs efficiently. Together, security-enhanced technology and effective compliance processes enable Microsoft to maintain and expand a rich set of third-party certifications. These help customers demonstrate compliance readiness to their customers, auditors, and regulators. As part of its commitment to transparency, Microsoft shares third-party verification results with its customers.

Azure meets a broad set of international as well as regional and industry-specific compliance standards, such as ISO 27001, FedRAMP, SOC 1, and SOC 2. Azure's adherence to the strict security controls contained in these standards is verified by rigorous third-party audits that demonstrate Azure services work with and meet world-class industry standards, certifications, attestations, and authorizations.

Azure is designed with a compliance strategy that helps customers address business objectives and industry standards and regulations. The security compliance framework includes test and audit phases, security analytics, risk management best practices, and security benchmark analysis to achieve certificates and attestations. Microsoft Azure offers the following certifications for all in-scope services.

- Content Delivery and Security Association (CDSA)

- Criminal Justice Information Services (CJIS)

- Cloud Security Alliance (CSA) Cloud Controls Matrix

- EU Model Clauses

- US Food and Drug Administration (FDA) Code of Federal Regulations (CFR) Title 21 Part 11

- Federal Risk and Authorization Management Program (FedRAMP)

- Family Educational Rights and Privacy Act (FERPA)

- Federal Information Processing Standard (FIPS) Publication 140-2

- Health Insurance Portability and Accountability Act (HIPAA)

- Information Security Registered Assessors Program (IRAP)

- ISO/IEC 27018

- ISO/IEC 27001/27002:2013

- Multi-Level Protection Scheme (MLPS)

- Multi-Tier Cloud Security Standard for Singapore (MTCS SS)

- Payment Card Industry (PCI) Data Security Standards (DSS)

- Service Organization Control (SOC) reporting framework for both SOC 1 Type 2 and SOC 2 Type 2

- Trusted Cloud Service certification developed by the China Cloud Computing Promotion and Policy Forum (CCCPPF)

- UK Government G-Cloud

Azure Security Center

Security Center helps you prevent, detect, and respond to threats with increased visibility into and control over the security of your Azure resources. It provides integrated security monitoring and policy management across your Azure subscriptions, helps detect threats that might otherwise go unnoticed, and works with a broad ecosystem of security solutions. Security Center delivers easy-to-use and effective threat prevention, detection, and response capabilities that are built in to Azure. Key capabilities are:

Prevent:

- Monitors the security state of your Azure resources

- Defines policies for your Azure subscriptions and resource groups based on your company's security requirements, the types of applications that you use, and the sensitivity of your data

- Uses policy-driven security recommendations to guide service owners through the process of implementing needed controls

- Rapidly deploys security services and appliances from Microsoft and partners

Detect:

- Automatically collects and analyzes security data from your Azure resources, the network, and partner solutions like antimalware programs and firewalls

- Leverages global threat intelligence from Microsoft products and services, the Microsoft Digital Crimes Unit (DCU), the Microsoft Security Response Center (MSRC), and external feeds

- Applies advanced analytics, including machine learning and behavioral analysis

Respond:

- Provides prioritized security incidents/alerts

- Offers insights into the source of the attack and impacted resources

- Suggests ways to stop the current attack and help prevent future attacks

Microsoft US government cloud

Azure Government is a government-community cloud (GCC) designed to support strategic government scenarios that require speed, scale, security, compliance, and economics for US government organizations. It was developed based on Microsoft's extensive experience delivering software, security, compliance, and controls in other Microsoft cloud offerings such as Azure public, Office 365, Office 365 GCC, Microsoft CRM Online, etc.

In addition, Azure Government is designed to meet the higher level security and compliance needs for sensitive, dedicated, US Public Sector workloads found in regulations such as United States Federal Risk and Authorization Management Program (FedRAMP), Department of Defense Enterprise Cloud Service Broker (ECSB), Criminal Justice Information Services (CJIS) Security Policy, and Health Insurance Portability and Accountability Act (HIPAA).

Azure Government includes the core components of Infrastructure as a Service (IaaS) and Platform as a Service (PaaS). This includes infrastructure, network, storage, data management, identity management, and many other services.

Microsoft cloud in Germany

Starting in 2016, Microsoft will offer its cloud services Microsoft Azure, Office 365, and Dynamics CRM Online from within German datacenters. What distinguishes this offering is that the keys (physical and logical) that control access to customer data in this cloud are held by a German company, Deutsche Telekom's subsidiary T-Systems, which acts as a Data Trustee. Microsoft therefore has no access to customer data without approval and supervision by the Data Trustee.

All access rights are handled by a role-based access control (RBAC) model. Roles are based on functions (Reader, Owner, etc.) and/or on realms (server, mailboxes, resource groups, etc.). In this model, Microsoft has no rights at all to access customer data. Only for a specific purpose, such as a support call from a customer, does the Data Trustee grant temporary access to the Microsoft engineer, and only for the specified area. After the granted time expires, all access is revoked automatically. Microsoft has no way to grant that access to itself. The process is logged to an area to which Microsoft also has no access. In addition, the Data Trustee escorts the session and observes the engineer at work.

RBAC is also in place for physical access to the datacenters. The Data Trustee has to approve the visit and will escort Microsoft or any of its subcontractors at all times during the visit. In every case where Microsoft could come in contact with customer data, it needs a reason related to operation of the services (incident, support case), a well-defined area of access, and a well-defined time period; only then will the Data Trustee grant access.
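The access rules described above (a stated reason, a defined area, a limited time period, automatic revocation, and logging outside the requester's control) can be illustrated with a small model. This is only a sketch under stated assumptions: the class names `DataTrustee` and `AccessGrant`, the scope strings, and the engineer name are hypothetical and do not represent the actual Data Trustee tooling.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class AccessGrant:
    """Hypothetical record of one temporary, scoped grant."""
    engineer: str
    scope: str            # the well-defined area of access
    reason: str           # incident or support case
    expires_at: datetime  # access ends automatically at this time


class DataTrustee:
    """Hypothetical trustee: the only party able to issue grants."""

    def __init__(self):
        self._grants = []
        self.audit_log = []  # held by the trustee, not by the requester

    def grant(self, engineer, scope, reason, duration, now):
        # A grant needs an operational reason, a scope, and a time period.
        if not reason.strip():
            raise ValueError("an operational reason is required")
        issued = AccessGrant(engineer, scope, reason, now + duration)
        self._grants.append(issued)
        self.audit_log.append(("granted", engineer, scope, now))
        return issued

    def is_allowed(self, engineer, scope, now):
        # Access holds only for the granted scope and only until expiry.
        allowed = any(
            g.engineer == engineer and g.scope == scope and now < g.expires_at
            for g in self._grants
        )
        self.audit_log.append(
            ("allowed" if allowed else "denied", engineer, scope, now)
        )
        return allowed


trustee = DataTrustee()
start = datetime(2016, 1, 1, 9, 0)
trustee.grant("engineer-a", "resource-group-x", "customer support case",
              timedelta(hours=2), start)
```

In this sketch, expiry is checked at access time rather than by a background job, so no separate revocation step is needed once the period has passed.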

Customer data is only stored in the German datacenters. Data exchange between the two Azure regions in Germany (Germany Central and Germany Northeast) is handled by a dedicated network line leased from a German provider, to make sure that no data is accidentally routed outside of Germany. There is no additional replication or backup to other regions; even Azure Active Directory is only replicated between those two German Azure regions.

For encryption and securing data traffic between client applications and cloud servers, Microsoft relies on the nationally recognized certification authority of Bundesdruckerei GmbH, D-TRUST. This assures customers of Microsoft Cloud Germany that their data is protected by the latest encryption technologies available in the market. With the safety concepts of Bundesdruckerei, users and servers can be reliably authenticated to ensure encrypted traffic.

Cloud security recommendations for enterprise architects

Although Microsoft is committed to the privacy and security of your data and applications in the cloud, customers must take an active role in the security partnership. Ever-evolving cybersecurity threats increase the requirements for security rigor and principles at all layers for both on-premises and cloud assets. Enterprise organizations are better able to manage and address concerns about security in the cloud when they take a systematic approach. Moving workloads to the cloud shifts many security responsibilities and costs to Microsoft, freeing your security resources to focus on the critically important areas of data, identity, strategy, and governance.

Your responsibility for security is based on the type of cloud service. The chart summarizes the balance of responsibility for both Microsoft and the customer.

| Develop cloud security policies | Policies enable you to align your security controls with your organization's goals, risks, and culture. Policies should provide clear, unequivocal guidance to enable good decisions by all practitioners. |

| Manage continuous threats | The evolution of security threats and changes requires comprehensive operational capabilities and ongoing adjustments. Proactively manage this risk. |

| Manage continuous innovation | The rate of capability releases and updates from cloud services requires proactive management of potential security impacts. |

| Contain risk by assuming breach | When planning security controls and security response processes, assume an attacker has compromised other internal resources such as user accounts, workstations, and applications. Assume an attacker will use these resources as an attack platform. Modernize your containment strategy by: |

| Least privilege admin model | Apply least-privilege approaches to your administrative model, including: |

| Harden security dependencies | Security dependencies include anything that has administrative control of an asset. Ensure that you harden all dependencies at or above the security level of the assets they control. Security dependencies for cloud services commonly include identity systems, on-premises management tools, administrative groups and accounts, and workstations where these accounts log on. |

| Use strong authentication | Use credentials secured by hardware or Multi-Factor Authentication (MFA) for all identities with administrative privileges. This mitigates risk of stolen credentials being used to abuse privileged accounts. |

| Use dedicated admin accounts and workstations | Separate high-impact assets from highly prevalent Internet browsing and email risks: |

| Enforce stringent security standards | Administrators control significant numbers of organizational assets. Rigorously measure and enforce stringent security standards on administrative accounts and systems. This includes cloud services and on-premises dependencies such as Active Directory, identity systems, management tools, security tools, administrative workstations, and associated operating systems. |

| Monitor admin accounts | Closely monitor the use and activities of administrative accounts. Configure alerts for activities that are high impact as well as for unusual or rare activities. |

| Educate and empower admins | Educate administrative personnel on likely threats and their critical role in protecting their credentials and key business data. Administrators are the gatekeepers of access to many of your critical assets. Empowering them with this knowledge will enable them to be better stewards of your assets and security posture. |

| Establish information protection priorities | The first step to protecting information is identifying what to protect. Develop clear, simple, and well-communicated guidelines to identify, protect, and monitor the most important data assets anywhere they reside. |

| Protect High Value Assets (HVAs) | Establish the strongest protection for assets that have a disproportionate impact on the organization's mission or profitability. Perform stringent analysis of HVA lifecycle and security dependencies, and establish appropriate security controls and conditions. |

| Find and protect sensitive assets | Identify and classify sensitive assets. Define the technologies and processes to automatically apply security controls. |

| Set organizational minimum standards | Establish minimum standards for trusted devices and accounts that access any data assets belonging to the organization. This can include device configuration compliance, device wipe, enterprise data protection capabilities, user authentication strength, and user identity. |

| Establish user policy and education | Users play a critical role in information security and should be educated on your policies and norms for the security aspects of data creation, classification, compliance, sharing, protection, and monitoring. |

| Use strong authentication | Use credentials secured by hardware or Multi-Factor Authentication (MFA) for all identities to mitigate the risk that stolen credentials can be used to abuse accounts. |

| Manage trusted and compliant devices | Establish, measure, and enforce modern security standards on devices that are used to access corporate data and assets. Apply configuration standards and rapidly install security updates to lower the risk of compromised devices being used to access or tamper with data. |

| Educate, empower, and enlist users | Users control their own accounts and are on the front line of protecting many of your critical assets. Empower your users to be good stewards of organizational and personal data. At the same time, acknowledge that user activities and errors carry security risks that can be mitigated but never completely eliminated. Focus on measuring and reducing risks from users. |

| Monitor for account and credential abuse | One of the most reliable ways to detect abuse of privileges, accounts, or data is to detect anomalous activity of an account. |

| Secure applications that you acquire | |

| Follow the Security Development Lifecycle (SDL) | Software applications with source code you develop or control are a potential attack surface. These include PaaS apps, PaaS apps built from sample code in Azure (such as WordPress sites), and apps that interface with Office 365. Follow code security best practices in the Microsoft Security Development Lifecycle (SDL) to minimize vulnerabilities and their security impact. See www.microsoft.com/sdl. |

| Update your network security strategy and architecture for cloud computing | Ensure your network architecture is ready for the cloud by updating your current approach or taking the opportunity to start fresh with a modern strategy for cloud services and platforms. Align your network strategy with your: Your design should address securing communications: |

| Optimize with cloud capabilities | Cloud computing offers uniquely flexible network capabilities as topologies are defined in software. Evaluate the use of these modern cloud capabilities to enhance your network security auditability, discoverability, and operational flexibility. |

| Manage and monitor network security | Ensure your processes and technology capabilities are able to distinguish anomalies and variances in configurations and network traffic flow patterns. Cloud computing utilizes public networks, allowing rapid exploitation of misconfigurations that should be avoided or rapidly detected and corrected. |

| Virtual operating system | Secure the virtual host operating system (OS) and middleware running on virtual machines. Ensure that all aspects of the OS and middleware security meet or exceed the level required for the host, including: |

| Virtual OS management tools | System management tools have full technical control of the host operating systems (including the applications, data, and identities), making these a security dependency of the cloud service. Secure these tools at or above the level of the systems they manage. These tools typically include: |

Azure enterprise administration

The Azure Enterprise Agreement portal allows large enterprise customers of Azure to manage Azure subscriptions and associated licensing information from a central portal. Enterprise Agreement (EA) customers can add Azure to their EA by making an upfront monetary commitment to Azure. That commitment is consumed throughout the year by using any combination of the wide variety of cloud services Azure offers from its global datacenters. Within a given enterprise enrollment, Microsoft Azure has several roles that individuals play. The Enterprise Administrator has the ability to add or associate Accounts and Departments to the Enrollment, can view usage data across all Accounts and Departments, and is able to see the monetary commitment balance associated to the Enrollment. There is no limit to the number of Enterprise Administrators on an Enrollment.

Departments can be leveraged if an additional level to structure the Accounts and Subscriptions is needed. Cost center and Start/End date can be added as an attribute to the Department. Department Administrators can manage Department properties, manage accounts under the department they administer, download usage details, and view monthly Usage and Charges associated to their Department if the Enterprise Administrator has granted permission to do so. The Account Owner can add Subscriptions for their Account, update the Service Administrator and Co-Administrator for an individual Subscription, view usage data for their Account, and view Account charges if the Enterprise Administrator has provided access. Account Owners will not have visibility of the monetary commitment balance unless they also have Enterprise Administrator rights. The Service Administrator and up to 200 Co-Administrators per Subscription have the ability to access and manage Subscriptions and development projects within the classic Azure Management Portal. Service Administrators do not have access to the Enterprise Portal unless they also have one of the other two roles. The Resource Group Administrators manage a group of resources within a subscription that collectively provide a service and share a lifecycle: single project or service focused.
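The enrollment structure described above (Enrollment, optional Departments, Accounts, Subscriptions) can be pictured as a simple tree in which usage rolls up from subscriptions to the enrollment and is consumed against the monetary commitment. The following is a minimal sketch; all class names, field names, and figures are illustrative assumptions and not part of any Azure API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Subscription:
    name: str
    usage: float = 0.0  # charges accrued by this subscription


@dataclass
class Account:
    owner: str
    subscriptions: List[Subscription] = field(default_factory=list)

    def usage(self) -> float:
        return sum(s.usage for s in self.subscriptions)


@dataclass
class Department:
    name: str
    cost_center: str  # optional attribute mentioned in the text
    accounts: List[Account] = field(default_factory=list)

    def usage(self) -> float:
        return sum(a.usage() for a in self.accounts)


@dataclass
class Enrollment:
    commitment: float  # upfront monetary commitment
    departments: List[Department] = field(default_factory=list)

    def usage(self) -> float:
        return sum(d.usage() for d in self.departments)

    def remaining_commitment(self) -> float:
        # Only the Enterprise Administrator sees this balance.
        return self.commitment - self.usage()


engineering = Department("Engineering", "CC-100", [
    Account("owner-a", [Subscription("dev", 1200.0),
                        Subscription("test", 300.0)]),
])
marketing = Department("Marketing", "CC-200", [
    Account("owner-b", [Subscription("campaigns", 500.0)]),
])
enrollment = Enrollment(10000.0, [engineering, marketing])
```

Each level exposes only its own subtree, which mirrors the visibility rules in the text: a Department Administrator would call `engineering.usage()`, while the commitment balance is available only at the enrollment level.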

The primary tools that are used by these roles are:

| Enterprise Administrator | |

| Departmental Administrator | |

| Account Owner | |

| Service Administrator | |

| Co-Administrator | |

| Resource Group Administrator | |

Understanding Azure subscriptions

Initially, a subscription was the administrative security boundary of Microsoft Azure. With the advent of the Azure Resource Manager (ARM) model, a subscription now has two administrative models: Azure Service Management and Azure Resource Manager. With ARM, the subscription is no longer needed as an administrative boundary. ARM provides a more granular Role-Based Access Control (RBAC) model for assigning administrative privileges at the resource level. Using RBAC, you can segregate duties within your team and grant users only the access that they need to perform their jobs. Access is granted by assigning the appropriate RBAC role to users, groups, and applications at a certain scope. The scope of a role assignment can be a subscription, a resource group, or a single resource. A role assigned at a parent scope also grants access to the children contained within it. For example, a user with access to a resource group can manage all the resources it contains, like websites, virtual machines, and subnets.

The RBAC role that is assigned dictates what resources the user, group, or application can manage within that scope. Azure RBAC has three basic roles that apply to all resource types:

- Owner has full access to all resources including the right to delegate access to others.

- Contributor can create and manage all types of Azure resources but can't grant access to others.

- Reader can view existing Azure resources.

The rest of the RBAC roles in Azure allow management of specific Azure resources. For example, the Virtual Machine Contributor role allows users to create and manage virtual machines. It does not give them access to the virtual network or the subnet that the virtual machine connects to.
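The scope inheritance and the three basic roles described above can be sketched as a small authorization check. This is a simplified model, not the Azure implementation: the scope paths, the principals, and the reduction of each role to "read", "write", and "delegate" are assumptions made for illustration.

```python
# Simplified permissions of the three basic roles (assumed action names).
ROLE_ACTIONS = {
    "Owner": {"read", "write", "delegate"},
    "Contributor": {"read", "write"},
    "Reader": {"read"},
}


def covers(assigned_scope, target_scope):
    """True if target_scope is the assigned scope or one of its children."""
    parent = assigned_scope.rstrip("/")
    return target_scope == parent or target_scope.startswith(parent + "/")


def is_authorized(assignments, principal, action, target_scope):
    """Check (principal, role, scope) assignments against a request."""
    return any(
        who == principal
        and covers(scope, target_scope)
        and action in ROLE_ACTIONS[role]
        for who, role, scope in assignments
    )


# Hypothetical assignments: one at resource group scope, one at
# subscription scope.
assignments = [
    ("alice", "Contributor", "/subscriptions/sub-1/resourceGroups/web"),
    ("bob", "Reader", "/subscriptions/sub-1"),
]
vm = "/subscriptions/sub-1/resourceGroups/web/virtualMachines/vm-1"
other = "/subscriptions/sub-1/resourceGroups/data/databases/db-1"
```

Here `alice` can manage `vm` because it is a child of the resource group she was assigned to, but she has no access to `other`; `bob` can read both because his Reader role is assigned at the subscription scope.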

A subscription additionally forms the billing unit. Service charges are accrued to the subscription. As part of the new Azure Resource Manager model, it is also possible to roll up costs. A standard naming convention for Azure resource object types can be used to manage billing across project teams, business units, or any other desired view. See section 9.4 for further details.
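As an illustration of how a naming convention can support cost roll-up, the sketch below groups charges by the leading segments of each resource name. The convention `<business unit>-<project>-<resource>`, the resource names, and the amounts are purely hypothetical; the convention actually recommended is the one described in section 9.4.

```python
from collections import defaultdict


def roll_up(charges, level=1):
    """Sum charges by the first `level` hyphen-separated name segments.

    `charges` is an iterable of (resource_name, cost) pairs, where names
    follow the assumed pattern <business unit>-<project>-<resource>.
    """
    totals = defaultdict(float)
    for name, cost in charges:
        key = "-".join(name.split("-")[:level])
        totals[key] += cost
    return dict(totals)


# Hypothetical usage records.
charges = [
    ("finance-payroll-vm01", 120.0),
    ("finance-payroll-sql01", 80.5),
    ("finance-reporting-web01", 40.0),
    ("hr-portal-web01", 10.0),
]

by_business_unit = roll_up(charges, level=1)
by_project = roll_up(charges, level=2)
```

With `level=1` the charges are grouped per business unit, and with `level=2` per project within a business unit, all inside a single subscription.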

A subscription is also a logical limit of scale by which resources can be allocated. These limits include hard and soft caps of various resource types (see https://azure.microsoft.com/en-us/documentation/articles/azure-subscription-service-limits/). Scalability is a key element for understanding how the subscription strategy will account for growth as consumption increases. If you want to raise the limit above the Default Limit, you can open an online customer support request at no charge.

Every Azure subscription has a trust relationship with an Azure Active Directory instance (see section 6 for further details). This means that it trusts that directory to authenticate users, services, and devices. Multiple subscriptions can trust the same directory, but a subscription trusts only one directory.

This trust relationship that a subscription has with a directory is unlike the relationship that a subscription has with all other resources in Azure (websites, databases, and so on), which are more like child resources of a subscription. If a subscription expires, then access to those other resources associated with the subscription also stops. But the directory remains in Azure, and you can associate another subscription with that directory and continue to manage the directory users.
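The relationship described above (many subscriptions may trust one directory, each subscription trusts exactly one, and the directory outlives an expired subscription) can be modeled in a few lines. The class names `AzureDirectory` and `DirectorySubscription` and all values are hypothetical and serve only to illustrate the relationship.

```python
class AzureDirectory:
    """Hypothetical stand-in for an Azure Active Directory instance."""

    def __init__(self, name):
        self.name = name
        self.users = set()


class DirectorySubscription:
    """Hypothetical subscription that trusts exactly one directory."""

    def __init__(self, name, directory):
        self.name = name
        self.directory = directory  # single trusted directory
        self.resources = []         # child resources (websites, databases)
        self.active = True

    def expire(self):
        # Access to child resources stops; the directory is untouched.
        self.active = False

    def accessible_resources(self):
        return list(self.resources) if self.active else []


directory = AzureDirectory("contoso-directory")
directory.users.add("user-a")

production = DirectorySubscription("production", directory)
production.resources.append("website-1")
development = DirectorySubscription("development", directory)

production.expire()
# The directory and its users remain, and a new subscription can be
# associated with the same directory.
replacement = DirectorySubscription("production-2", directory)
```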

As a best practice, you should sign up for Azure as an organization and use a work or school account to manage resources in Azure. Work or school accounts are preferred because they can be centrally managed by the organization that issued them, they have more features than Microsoft accounts, and they are directly authenticated by Azure Active Directory. The same account provides access to other Microsoft online services that are offered to businesses and organizations, such as Office 365 or Microsoft Intune. If you already have an account that you use with those other properties, you likely want to use that same account with Azure.

The important point here is that Azure subscription admins and Azure AD directory admins are two separate concepts. Azure subscription admins can manage resources in Azure. Directory admins can manage properties in the directory. A person can be in both roles, but this isn't required.

Managing Azure subscriptions

In an enterprise environment, it is key to set up Azure subscriptions in a way that ensures they support the requirements and fulfill the needs for reporting, segregation, and management today and in the future. It is also important to minimize migration of resources between subscriptions because of subscription reorganizations. There are several motivations for using multiple Azure subscriptions. The most common ones are:

- Project-based billing and chargeback

Individual projects often need their own Azure bills. Today, within Azure, the lowest level of cost aggregation is the subscription. This constraint can be overcome by using third-party tools with additional billing and chargeback capabilities or by building a custom solution with the APIs described in section 9.

- Reuse of shared infrastructure

Some applications and services will be dependent on components of shared infrastructure. The most popular scenario is sharing a common VPN to on-premises infrastructure. Today Azure imposes a constraint that a VPN is tied to a specific Virtual Network, which in turn is allocated to a specific Subscription. It is possible to connect different Azure Virtual Networks together with additional Site-to-Site VPNs. The Azure Virtual Networks can be in the same or in different subscriptions. One ExpressRoute circuit can connect multiple Azure Virtual Networks across multiple subscriptions as long as the location of the Virtual Networks is connected with the ExpressRoute circuit. These constraints often drive project teams toward a shared subscription model.

- Security least privilege

A subscription is a security boundary: an administrator of a subscription can modify any resource within that subscription. If subscriptions are shared across teams, these administrators have greater rights than they need to perform their role, which increases the security risk. Role-Based Access Control (RBAC) makes it possible to assign roles and rights at the resource group and resource level. There are currently more than 20 built-in roles that can be assigned to users and groups, allowing granular assignment of permissions within an Azure subscription. This significantly reduces the number of subscriptions required. For creating additional custom RBAC roles, please refer to https://azure.microsoft.com/en-us/documentation/articles/role-based-access-control-custom-roles.
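To illustrate why scoped role assignments reduce the need for separate subscriptions, the following is a minimal Python sketch of scope inheritance: an assignment made at subscription, resource group, or resource scope applies to everything beneath that scope. The subscription ID, resource group names, principals, and the `RoleAssignment` helper are hypothetical. The sketch models the concept only and does not call any Azure API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoleAssignment:
    principal: str  # user or group (hypothetical names)
    role: str       # a role name such as "Reader" or "Contributor"
    scope: str      # subscription, resource group, or resource ID


def applies_to(assignment, resource_id):
    """True if the assignment's scope is the resource itself or one of its parents."""
    scope = assignment.scope.rstrip("/").lower()
    target = resource_id.rstrip("/").lower()
    return target == scope or target.startswith(scope + "/")


def roles_for(principal, resource_id, assignments):
    """Sorted role names that a principal holds on a given resource."""
    return sorted(
        {a.role for a in assignments
         if a.principal == principal and applies_to(a, resource_id)}
    )


# Hypothetical subscription and assignments, for illustration only.
SUB = "/subscriptions/00000000-0000-0000-0000-000000000000"
ASSIGNMENTS = [
    RoleAssignment("ops-team", "Reader", SUB),
    RoleAssignment("web-team", "Contributor", f"{SUB}/resourceGroups/web-rg"),
]

web_vm = f"{SUB}/resourceGroups/web-rg/providers/Microsoft.Compute/virtualMachines/web01"
db_vm = f"{SUB}/resourceGroups/db-rg/providers/Microsoft.Compute/virtualMachines/db01"

print(roles_for("web-team", web_vm, ASSIGNMENTS))  # rights inside its own resource group
print(roles_for("web-team", db_vm, ASSIGNMENTS))   # no rights in another team's group
```

In this model, two teams share one subscription, yet each team's rights stop at its own resource group, which is the effect the paragraph above describes.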

One of the most critical items in the process of designing a subscription is assessing your current environment and needs. Specifically, it is important to have a thorough understanding of the following aspects:

Identify business requirements

- Availability

- Recoverability

- Performance

Identify technical requirements

- Is network connectivity a shared resource or dedicated to single use or group?

- Are there Active Directory requirements?

- Do you need to consider clustering, identity, or management tools?

Security requirements

- Who are the subscription administrators?

- Are the appropriate network connectivity and identity requirements being deployed?

- Have you implemented a least privilege administrative model?

Scalability requirements

- What are the growth plans?

- How will limited resources be allocated?

- How will the model evolve over time considering additional users, shared access, and resource limits?

Adding network connectivity (whether using a site-to-site VPN or a dedicated ExpressRoute connection) brings additional considerations to the subscription requirements discussion. For more information about network design, see section 5 in this document.

The subscription is a required container to hold a virtual network, and often networking is a shared resource within an enterprise. Site-to-site VPNs and ExpressRoute circuits require defining IP address ranges that do not overlap with on-premises ranges. Site-to-site VPN connectivity requires setting up and configuring a public-facing gateway and VPN services at the corporate edge. ExpressRoute connectivity is through a private connection from an on-premises datacenter to Azure through a service provider's private network. Routing and firewall configurations are typically necessary when enabling connectivity.
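The requirement for non-overlapping address ranges can be checked before any network is deployed. The following is a small sketch using Python's standard `ipaddress` module. The address ranges and the `find_overlaps` helper are illustrative assumptions, not values taken from this guide.

```python
import ipaddress


def find_overlaps(on_premises, azure):
    """Return (on-premises, Azure) pairs of CIDR ranges that overlap."""
    on_prem_nets = [ipaddress.ip_network(cidr) for cidr in on_premises]
    azure_nets = [ipaddress.ip_network(cidr) for cidr in azure]
    return [
        (str(p), str(a))
        for p in on_prem_nets
        for a in azure_nets
        if p.overlaps(a)
    ]


# Hypothetical ranges, for illustration only.
ON_PREMISES = ["10.0.0.0/16", "192.168.0.0/24"]
PROPOSED_VNETS = ["10.0.128.0/20", "10.1.0.0/16"]

conflicts = find_overlaps(ON_PREMISES, PROPOSED_VNETS)
print(conflicts)  # [('10.0.0.0/16', '10.0.128.0/20')]
```

An empty result would indicate that the proposed virtual network ranges can be routed alongside the on-premises ranges without conflict.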

If multiple virtual networks are to share a single enterprise ExpressRoute connection, essentially there is no network isolation between those networks. In this case, any separation the subscription design may try to define is eliminated and must be achieved through subnet layer Network Security Groups (NSGs). When the virtual networks are attached to the same ExpressRoute circuit, they are essentially a single routing domain. A subscription hosting only PaaS services could have no virtual network at all, and the design limitations discussed above would not apply.

The following diagram shows a robust enterprise Azure enrollment. There are multiple subscriptions, one of which is a "Tier 0" subscription used to host shared resources such as domain controllers and other sensitive roles when extending an on-premises Active Directory forest to Azure.

This is configured as a separate subscription to ensure that only administrators with domain administrator level privileges are able to exert administrative control over these sensitive servers through Azure subscriptions, while still allowing server administrators to manage virtual machines in other subscriptions.

QA and production networks share the same dedicated ExpressRoute circuit to on-premises resources. They are separated into distinct subscriptions to allow separation of access and to allow the QA subscription to scale on its own without impacting production.

This model will scale based on need. Second, third, and subsequent QA and production subscriptions can be added to this design without significant impact on operations. Those subscriptions can be managed by the project teams they belong to. The same scalability applies to network bandwidth—the circuit can be used until its limits are reached without any artificial limitations forcing additional purchases.

A typical subscription model will be based on a mixed model of Shared subscriptions and Project subscriptions (or business department subscriptions) driven by particular project requirements.

Defining naming conventions

When naming the Microsoft Azure subscription, it is a recommended practice to be verbose. Try using the following format or a format that has been agreed on by the stakeholders of the company.

<Company> <Department (optional)> <Product Line (optional)> <Environment>

- Company, in most cases, would be the same for each subscription. However, some companies may have child companies within the organizational structure. These companies may be managed by a central IT group, in which case they could be differentiated by having both the parent company name and child company name.

- Department is a name within the organization where a group of individuals work. This item within the namespace is optional. This is because some companies may not need to drill into such detail due to their size. The company may want to use a different identifier.

- Product line is a specific name for a product or function that is performed from within the department. As with the department namespace, this area is optional and can be swapped out as needed.

- Environment is the name that describes the deployment lifecycle of the applications or services, such as Dev, Lab, or Prod.

What you are trying to accomplish with a naming convention is to put together a meaningful name about the particular subscription and how it is represented within the company. Many organizations will have more than one subscription, which is why it is important to have a naming convention and use it consistently when creating subscriptions.
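A naming convention is easier to apply consistently when names are generated rather than typed by hand. The following is a minimal sketch of the format above in Python. The `subscription_name` helper and the example values (Contoso, Finance, Payroll) are hypothetical, and the rule that only company and environment are mandatory follows the "(optional)" markers in the format.

```python
def subscription_name(company, environment, department=None, product_line=None):
    """Build '<Company> <Department> <Product Line> <Environment>'.

    Department and product line are optional and are skipped when empty.
    """
    if not company or not company.strip():
        raise ValueError("company is required")
    if not environment or not environment.strip():
        raise ValueError("environment is required")
    parts = [company, department, product_line, environment]
    return " ".join(p.strip() for p in parts if p and p.strip())


# Hypothetical examples.
print(subscription_name("Contoso", "Prod", department="Finance", product_line="Payroll"))
# Contoso Finance Payroll Prod
print(subscription_name("Contoso", "Dev"))
# Contoso Dev
```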

Recommendations for Azure enterprise administration

| Recommendation | Description |
| --- | --- |
| Limit the number of administrative users | Assign a minimum number of users as Subscription Administrators and/or Co-administrators. |
| Use Role-Based Access | Use Azure Resource Management RBAC whenever possible to control the amount of access that administrators have, and log what changes are made to the environment. |
| Use work accounts | You should sign up for Azure as an organization and use a work or school account to manage resources in Azure. Do not allow the use of existing personal Microsoft Accounts. |
| Define naming conventions | Assign meaningful names to your Azure subscriptions according to defined naming conventions. |
| Use Tier 0 subscription | Use Tier 0 subscription to host shared resources, such as domain controllers and other sensitive roles, and limit the privileges to access it. |
| Use project subscriptions | Use decentralized project subscriptions. Delegate management of those subscriptions to the responsible project teams. |
| Separate production from QA | Separate QA environments into distinct subscriptions to allow separation of access and to allow the QA subscription to scale on its own without impacting production. |

Integrating Azure into the corporate network

Within Azure, there is the concept of virtual networks, subnets within the virtual networks, and the network gateways that allow connectivity between virtual networks and on-premises networks.

Virtual networks can be used to allow isolated network communication within the Azure environment or establish cross-premises network communication between an organization's network infrastructure and Azure. By default, when virtual machines are created and connected to Azure Virtual Network, they are allowed to route to any subnet within the virtual network, and outbound access to the Internet is provided by Azure's Internet connection.

A fundamental first step in creating services within Microsoft Azure is establishing a Virtual Network. To establish a virtual private network within Azure, you must create a minimum of one virtual network. Each virtual network must contain an IP address space and a minimum of one subnet that leverages all or part of the virtual network address space.
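The relationship between a virtual network's address space and its subnets can be validated with a few lines of Python. This is a sketch under stated assumptions: the `validate_vnet` helper, the subnet names, and the address ranges are hypothetical, and the check that subnets do not overlap each other is an added sanity check beyond what the paragraph states.

```python
import ipaddress
from itertools import combinations


def validate_vnet(address_space, subnets):
    """Return a list of problems; an empty list means the layout is consistent."""
    space = ipaddress.ip_network(address_space)
    nets = {name: ipaddress.ip_network(cidr) for name, cidr in subnets.items()}
    problems = []
    if not nets:
        problems.append("at least one subnet is required")
    for name, net in nets.items():
        # Each subnet must use all or part of the virtual network address space.
        if not net.subnet_of(space):
            problems.append(f"{name} ({net}) is outside {space}")
    for (name_a, net_a), (name_b, net_b) in combinations(nets.items(), 2):
        if net_a.overlaps(net_b):
            problems.append(f"{name_a} overlaps {name_b}")
    return problems


# Hypothetical layouts, for illustration only.
good = validate_vnet("10.1.0.0/16", {"frontend": "10.1.0.0/24", "backend": "10.1.1.0/24"})
bad = validate_vnet("10.1.0.0/16", {"frontend": "10.1.0.0/24", "backend": "10.2.0.0/24"})
print(good)  # []
print(bad)   # ['backend (10.2.0.0/24) is outside 10.1.0.0/16']
```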

Choosing the right connectivity option

To establish network communication with on-premises networks or with other virtual networks, a gateway subnet must be allocated in the virtual network and a virtual network gateway must be added to it.

Currently, there are three types of gateways that can be deployed:

- Static routing gateway for Site-to-Site (S2S) VPN connections (basic, standard, and high performance)

- Dynamic routing gateway for Site-to-Site (S2S) VPN connections (basic, standard, and high performance)

- Dynamic Routing ExpressRoute gateway (standard and high performance)

The type of gateway determines the cross-premises connectivity capabilities, the performance, and the features that are offered. Static and dynamic gateways are used when establishing Point-to-Site (P2S) and Site-to-Site (S2S) VPN connections where the cross-premises connectivity leverages the Internet for the transport path. ExpressRoute gateways are designed for high-speed, private, cross-premises connectivity where the traffic flows across dedicated circuits and not the Internet.

A static routing gateway uses policy-based VPNs. Policy-based VPNs encrypt and route packets through an interface based on a customer-defined policy. Static gateways are for establishing low-cost connections to a single virtual network in Azure.

Dynamic routing gateways use route-based VPNs. Route-based VPNs depend on a tunnel interface specifically created for forwarding packets. Any packet arriving at the tunnel interface is forwarded through the VPN connection. Dynamic gateways are used to establish low-cost connections to an on-premises environment or to connect multiple virtual networks for routing purposes in Azure. In addition, they support Border Gateway Protocol (BGP) routing (see https://azure.microsoft.com/en-us/documentation/articles/role-based-access-control-custom-roles/).

ExpressRoute gateways are always dynamic routing gateways that support BGP routing protocols. ExpressRoute gateways are used for connecting on-premises environments to Azure over high-speed private connections.
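The differences between the three gateway types can be restated as a small lookup table. The sketch below, in Python, is only a summary of the preceding paragraphs and not an Azure API. The `GatewayOption` type and the `candidates` helper are hypothetical, and marking the static routing gateway as not supporting BGP is an assumption inferred from the text, which mentions BGP only for dynamic routing and ExpressRoute gateways.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GatewayOption:
    name: str
    routing: str        # "static" (policy-based VPN) or "dynamic" (route-based)
    transport: str      # "internet" or "private"
    supports_bgp: bool  # assumption for the static gateway, see lead-in


OPTIONS = [
    GatewayOption("Static routing VPN gateway", "static", "internet", False),
    GatewayOption("Dynamic routing VPN gateway", "dynamic", "internet", True),
    GatewayOption("ExpressRoute gateway", "dynamic", "private", True),
]


def candidates(private_transport, needs_bgp):
    """Names of gateway types that match the transport and BGP requirements."""
    wanted = "private" if private_transport else "internet"
    return [
        o.name
        for o in OPTIONS
        if o.transport == wanted and (o.supports_bgp or not needs_bgp)
    ]


print(candidates(private_transport=False, needs_bgp=True))
print(candidates(private_transport=True, needs_bgp=False))
```

A real selection would also weigh the performance tier (basic, standard, or high performance) and cost, which this sketch leaves out.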

For Site-to-Site gateways, an IPsec/IKE VPN tunnel is created between the virtual networks and the on-premises sites by using Internet Key Exchange (IKE) protocol handshakes. For ExpressRoute, the gateways use BGP to advertise the prefixes in your virtual networks over the peering circuits. The gateways also forward packets from your ExpressRoute circuits to your virtual machines inside your virtual networks.

Each gateway has a limited number of other gateway connections that it can establish. The connection model between gateways dictates how far you can route within Azure. There are three distinct models that you can leverage to connect multiple virtual networks to one another:

- Mesh

- Hub and Spoke

- Daisy-Chain

In the Mesh approach, every virtual network can talk to every other virtual network with a single hop. Therefore, this approach does not require you to define multiple hop routing. Challenges with this approach include the rapid consumption of gateway connections, which limits the size of the virtual network routing capability.

In the Hub and Spoke approach, a virtual machine on vNet1 (the hub) can communicate with a virtual machine on vNet2, vNet3, vNet4, or vNet5. A virtual machine on vNet2 can communicate with virtual machines on vNet1, but not with virtual machines on vNet3, vNet4, or vNet5. This is due to the default single-hop isolation of the virtual network in this configuration.

In the Daisy-Chain approach, a virtual machine on vNet1 can communicate with a virtual machine on vNet2, but not with virtual machines on vNet3, vNet4, or vNet5. A virtual machine on vNet2 can communicate with virtual machines on vNet1 and vNet3. The same single-hop isolation between virtual networks applies.
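The single-hop behavior of the three models can be reproduced with a short Python sketch that treats each gateway connection as a link between two virtual networks. The five network names follow the examples above. The helper functions are hypothetical, and vNet1 is assumed to be the hub and the first element of the chain.

```python
from itertools import combinations

VNETS = ["vNet1", "vNet2", "vNet3", "vNet4", "vNet5"]


def mesh(vnets):
    """Every virtual network is connected to every other one."""
    return {frozenset(pair) for pair in combinations(vnets, 2)}


def hub_and_spoke(vnets):
    """The first virtual network is the hub; all others connect only to it."""
    hub, *spokes = vnets
    return {frozenset((hub, spoke)) for spoke in spokes}


def daisy_chain(vnets):
    """Each virtual network connects only to its neighbors in the list."""
    return {frozenset(pair) for pair in zip(vnets, vnets[1:])}


def reachable(vnet, links):
    """Virtual networks reachable from `vnet` in a single hop."""
    return sorted(other for link in links if vnet in link
                  for other in link if other != vnet)


def connections_used(vnet, links):
    """Number of gateway connections that `vnet` consumes."""
    return sum(1 for link in links if vnet in link)


print(reachable("vNet2", hub_and_spoke(VNETS)))   # ['vNet1']
print(reachable("vNet2", daisy_chain(VNETS)))     # ['vNet1', 'vNet3']
print(connections_used("vNet3", mesh(VNETS)))     # 4
```

With five virtual networks, the mesh needs ten links and every gateway holds four connections, while the other two models need only four links in total. This is the trade-off between reachability and gateway connection consumption described above.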

Azure supports two types of connectivity options to connect customers' networks to Azure virtual networks: Site-to-Site VPN and ExpressRoute. Although Point-to-Site is another viable connectivity option, it is client-focused and is not specific to this section.

Site-to-Site VPN connections use VPN devices over public Internet connections to create a path to route traffic to a virtual network in a customer subscription. Traffic to the virtual network flows across an encrypted VPN connection, while traffic to the Azure public services flows over the Internet. It is not possible to create a Site-to-Site VPN connection that provides direct connectivity to the public Azure services via a public peering path. To provide multiple VPN connections to the virtual network, you must use multiple VPN devices connected to different sites. These relationships are depicted in the following diagram:

ExpressRoute connections use routers and private network paths to route traffic to Azure Virtual Network and, optionally, to the Azure public services. Private connections are made through a network provider by establishing an ExpressRoute circuit with a selected provider. The customer's router is connected to the provider's router, and the provider creates the ExpressRoute circuit to connect to the Azure routers.